AWS and Cerebras Partner to Turbocharge AI Inference with Disaggregated Architecture

Amazon Web Services and Cerebras Systems announced a collaboration on Friday to bring what they describe as the fastest AI inference available in the cloud, using a novel "disaggregated inference" architecture.

How It Works

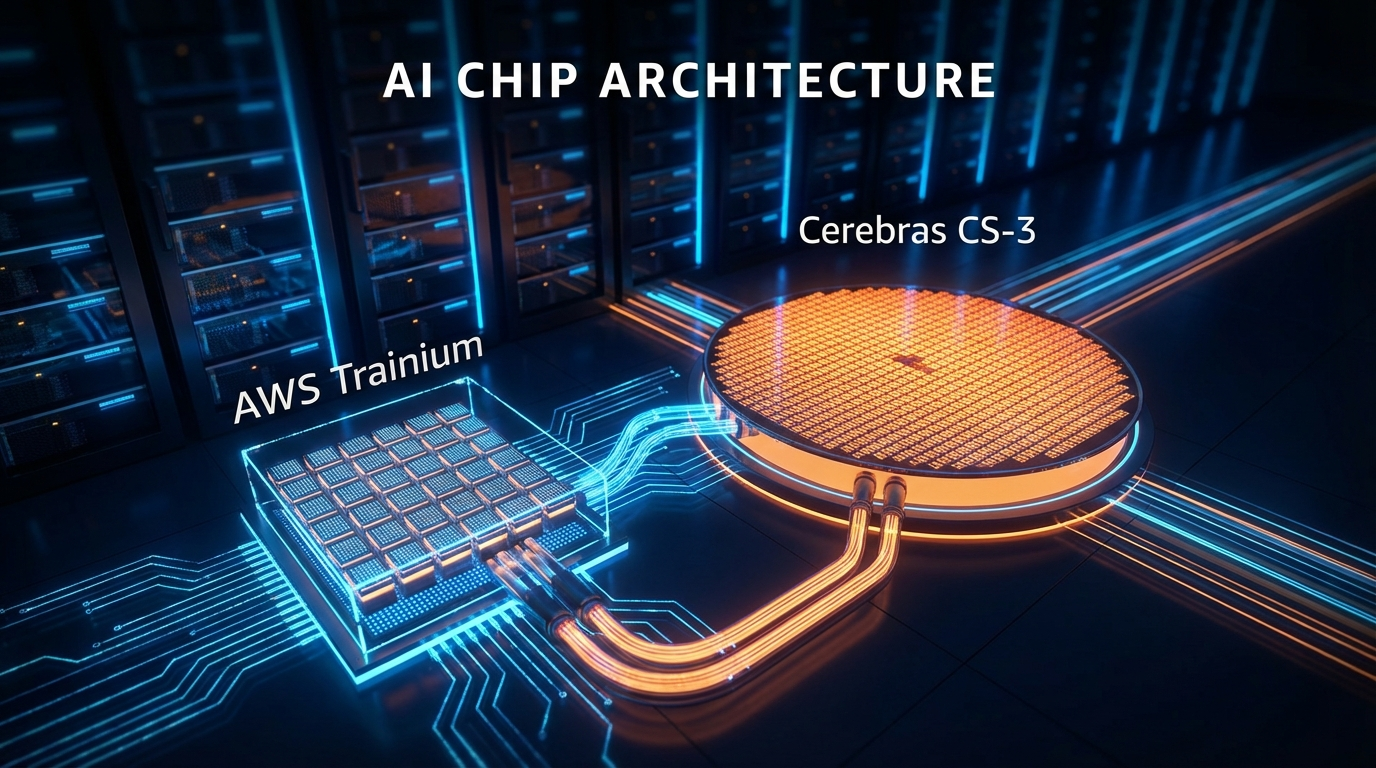

The approach splits AI inference into two distinct stages, prefill and decode, and assigns each to the chip best suited for it. AWS Trainium handles prefill, which processes the entire input prompt in a single pass and is computationally intensive and highly parallelizable. The Cerebras CS-3 takes on decode, where output tokens must be generated one at a time and memory bandwidth, not compute, is the bottleneck.
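To make the split concrete, here is a toy Python sketch of the two stages. It is not how Trainium or the CS-3 are actually programmed: `ToyModel`, `Cache`, `prefill`, and `decode` are hypothetical names, and the "model" is a trivial arithmetic rule. What it illustrates is the dependency structure described above: prefill computes per-token state for every prompt token with no dependency between tokens, while decode must produce each new token from everything generated before it.

```python
"""Toy sketch of disaggregated inference: separate prefill and decode stages.

All names here are hypothetical stand-ins, not AWS or Cerebras APIs.
The "model" is a trivial arithmetic rule so the control flow is easy to follow.
"""
from dataclasses import dataclass, field


@dataclass
class Cache:
    # Per-token state built during prefill and extended during decode
    # (a stand-in for a transformer's key/value cache).
    states: list = field(default_factory=list)


class ToyModel:
    vocab_size = 101

    def token_state(self, token: int) -> int:
        # Per-token work that does not depend on other tokens, so for a
        # whole prompt it can be done at once (in parallel on real hardware).
        return (token * 31 + 7) % self.vocab_size

    def next_token(self, cache: Cache) -> int:
        # The next token depends on all state accumulated so far.
        return sum(cache.states) % self.vocab_size


def prefill(model: ToyModel, prompt: list) -> Cache:
    # Stage 1 (Trainium in the AWS/Cerebras design): process the whole prompt
    # in one pass. No iteration depends on another, so it parallelizes.
    return Cache(states=[model.token_state(t) for t in prompt])


def decode(model: ToyModel, cache: Cache, max_new_tokens: int) -> list:
    # Stage 2 (CS-3 in the AWS/Cerebras design): generate strictly one token
    # at a time; step i needs the token produced at step i - 1.
    output = []
    for _ in range(max_new_tokens):
        tok = model.next_token(cache)
        output.append(tok)
        cache.states.append(model.token_state(tok))
    return output


if __name__ == "__main__":
    model = ToyModel()
    cache = prefill(model, [5, 17, 42])            # would run on the prefill system
    print(decode(model, cache, max_new_tokens=4))  # would run on the decode system
```

In a disaggregated deployment, the `Cache` returned by `prefill` is what would have to move from the prefill hardware to the decode hardware. Treating it as a key/value cache is an assumption drawn from how transformer serving generally works, not a detail stated by AWS or Cerebras.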

The two systems are connected inside AWS data centers using Amazon's Elastic Fabric Adapter (EFA) networking. The setup will be accessible through Amazon Bedrock, making AWS the first cloud provider to offer Cerebras' disaggregated inference solution.
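Why the interconnect matters: in typical disaggregated designs, the prefill stage hands the decode stage a key/value (KV) cache covering every prompt token, and that cache can be large. The article does not say what crosses the EFA link, so the sketch below assumes a KV-cache handoff and uses hypothetical model dimensions (80 layers, 8 KV heads, head size 128, 16-bit values) and a hypothetical 400 Gb/s link rather than published EFA or model figures.

```python
def kv_cache_bytes(
    num_layers: int,
    num_kv_heads: int,
    head_dim: int,
    seq_len: int,
    bytes_per_value: int = 2,
) -> int:
    """Size of a transformer KV cache: one key and one value vector
    per KV head, per layer, per token."""
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


def transfer_seconds(num_bytes: int, link_gbps: float) -> float:
    """Idealized transfer time over a link of `link_gbps` gigabits per second
    (ignores latency, protocol overhead, and overlap with computation)."""
    return num_bytes * 8 / (link_gbps * 1e9)


if __name__ == "__main__":
    # Hypothetical model shape and prompt length, chosen only for illustration.
    size = kv_cache_bytes(num_layers=80, num_kv_heads=8, head_dim=128, seq_len=8192)
    print(f"KV cache: {size / 1e9:.2f} GB")
    print(f"Transfer at 400 Gb/s: {transfer_seconds(size, 400) * 1e3:.1f} ms")
```

With those assumed numbers, an 8,192-token prompt produces about 2.7 GB of cache, or roughly 54 ms of raw transfer at the assumed link speed. That per-request handoff is why the two stages need a high-bandwidth fabric between them.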

Why It Matters

Decode, the token-by-token generation of output, typically dominates inference time in modern AI workloads, especially as reasoning models generate more tokens per request. Cerebras claims its CS-3 system delivers thousands of times more memory bandwidth than the fastest GPU, making it purpose-built for this bottleneck.
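A back-of-the-envelope roofline estimate shows why decode is limited by memory bandwidth. Assuming batch size 1 and that every model weight must be read from memory once per generated token (ignoring KV-cache reads, on-chip caching, and compute time), tokens per second cannot exceed memory bandwidth divided by model size. The 70-billion-parameter model, 16-bit weights, and bandwidth figures below are illustrative assumptions, not Cerebras or GPU specifications.

```python
def max_decode_tokens_per_second(
    num_params: float, bytes_per_param: float, mem_bandwidth_bytes_per_s: float
) -> float:
    """Upper bound on single-stream decode speed for a memory-bound model.

    Assumes batch size 1 and that all weights are streamed from memory once
    per generated token; KV-cache reads and compute time are ignored.
    """
    model_bytes = num_params * bytes_per_param
    return mem_bandwidth_bytes_per_s / model_bytes


if __name__ == "__main__":
    # Illustrative, hypothetical numbers: 70B parameters with 16-bit weights.
    params, bytes_per_param = 70e9, 2
    for label, bandwidth in [("3 TB/s", 3e12), ("30 TB/s", 30e12)]:
        bound = max_decode_tokens_per_second(params, bytes_per_param, bandwidth)
        print(f"{label}: at most {bound:.1f} tokens/s per stream")
```

Because the bound scales linearly with bandwidth, a large bandwidth advantage translates directly into a higher decode ceiling. In practice, batching many requests amortizes each weight read across several tokens, so aggregate throughput can exceed this single-stream figure even on bandwidth-limited hardware.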

AWS's David Brown said the goal is inference "an order of magnitude faster" than what is currently available. AWS also plans to offer open-source LLMs and Amazon Nova models via Cerebras hardware later this year.

OpenAI, Cognition, and Mistral already use Cerebras for production workloads. Anthropic and OpenAI are both committed Trainium customers.

The new Bedrock service is expected to launch within the next couple of months.