Z.ai Launches GLM-5-Turbo: A Faster, Closed-Source Agent Model

Z.ai, the Chinese AI startup behind the open-source GLM family of large language models, has launched GLM-5-Turbo — a faster, proprietary variant of GLM-5 engineered for enterprise agent workflows. The release marks a notable departure from the company's open-source roots.

Built for Execution, Not Chat

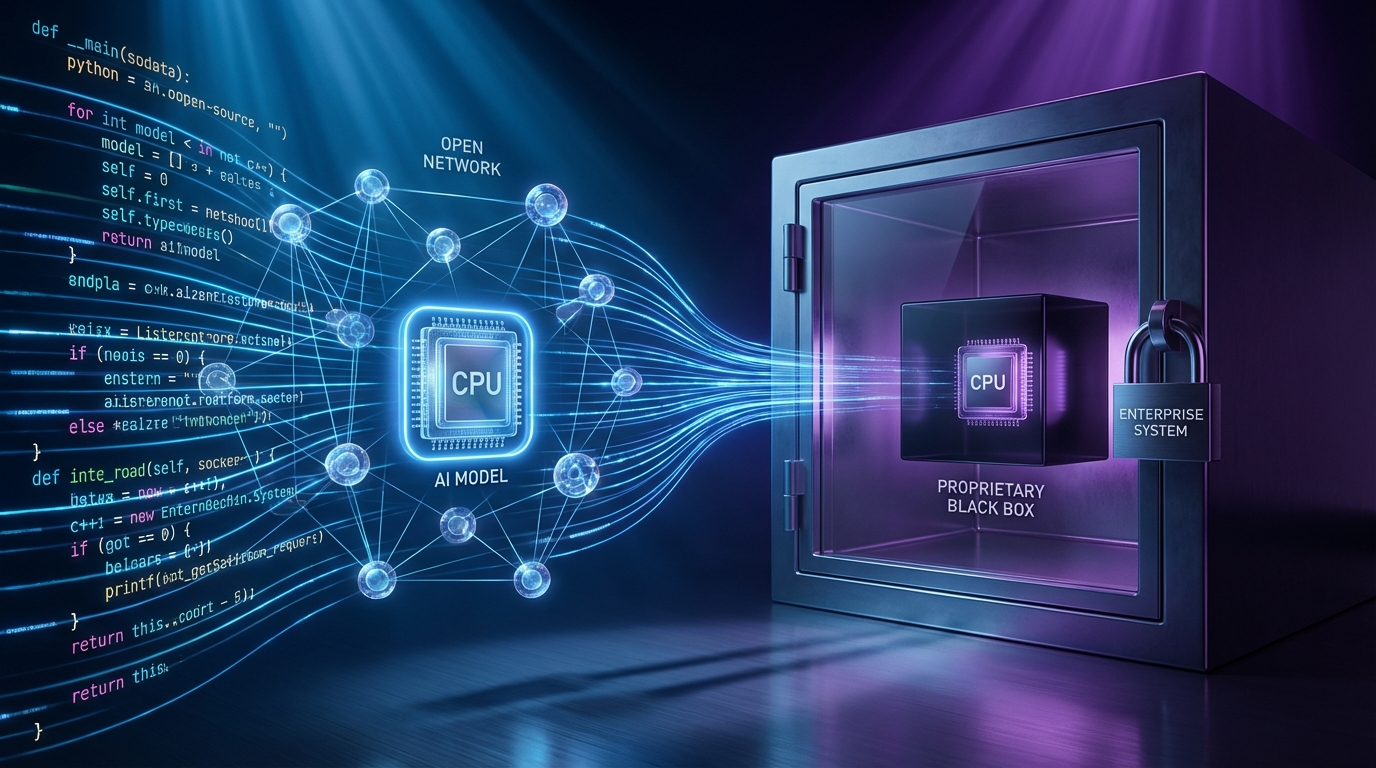

Unlike the flagship GLM-5 — released under a permissive open-source license — GLM-5-Turbo is closed-source. Z.ai has optimized it for the kind of sustained, multi-step work that agentic frameworks demand: complex instruction decomposition, tool invocation, scheduled execution, and long-chain task completion.

The model offers a 202.8K-token context window, up to 131.1K output tokens, throughput of 48 tokens per second, and a 0.67% tool error rate. It is now available on OpenRouter at $0.96 per million input tokens and $3.20 per million output tokens. That makes it slightly cheaper than GLM-5 on input ($1.00 per million) and identical on output ($3.20 per million).
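To put the price gap in concrete terms, here is a rough Python sketch that applies the per-million-token prices quoted above ($0.96/$3.20 for GLM-5-Turbo, $1.00/$3.20 for GLM-5) to a workload. The workload itself (10,000 calls, each with a 50K-token prompt and a 2K-token completion) is a hypothetical example chosen for illustration, not a figure from Z.ai or OpenRouter, and the sketch ignores any caching discounts or provider-specific billing rules.

```python
# Per-million-token prices in USD (input, output), as quoted for OpenRouter.
PRICES = {
    "GLM-5-Turbo": (0.96, 3.20),
    "GLM-5": (1.00, 3.20),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request under simple per-million-token pricing."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Hypothetical agent workload (illustrative numbers only).
CALLS = 10_000
IN_TOK, OUT_TOK = 50_000, 2_000

for model in PRICES:
    total = CALLS * request_cost(model, IN_TOK, OUT_TOK)
    print(f"{model}: ${total:,.2f}")
```

For this input-heavy workload the totals come to about $544 for GLM-5-Turbo versus $564 for GLM-5. Because output pricing is the same, the entire difference comes from input tokens at 4 cents per million, so the savings grow with prompt size rather than with completion length.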

The Open-to-Closed Pivot

Z.ai says insights from GLM-5-Turbo will eventually feed back into future open-source releases, but the model itself stays closed. The company is also folding the model into its GLM Coding subscription tiers (ranging from $27 to $216 per quarter), with Pro subscribers getting access in March and Lite subscribers following in April.

The move mirrors a pattern emerging across the AI industry: open-weight models drive developer adoption, while proprietary "turbo" variants capture enterprise value. As agentic AI use cases shift from experimental to production, more labs are choosing to monetize execution-layer improvements rather than open-source them outright.

Whether Z.ai's next open-source release will inherit Turbo's gains — and when — remains to be seen.