DeepMind Proposes Scientific Framework to Measure AGI — and Launches $200K Hackathon to Test It

How would we even know if AGI arrived? Google DeepMind thinks the industry needs a better answer to that question — and on March 17 it published a paper trying to build one.

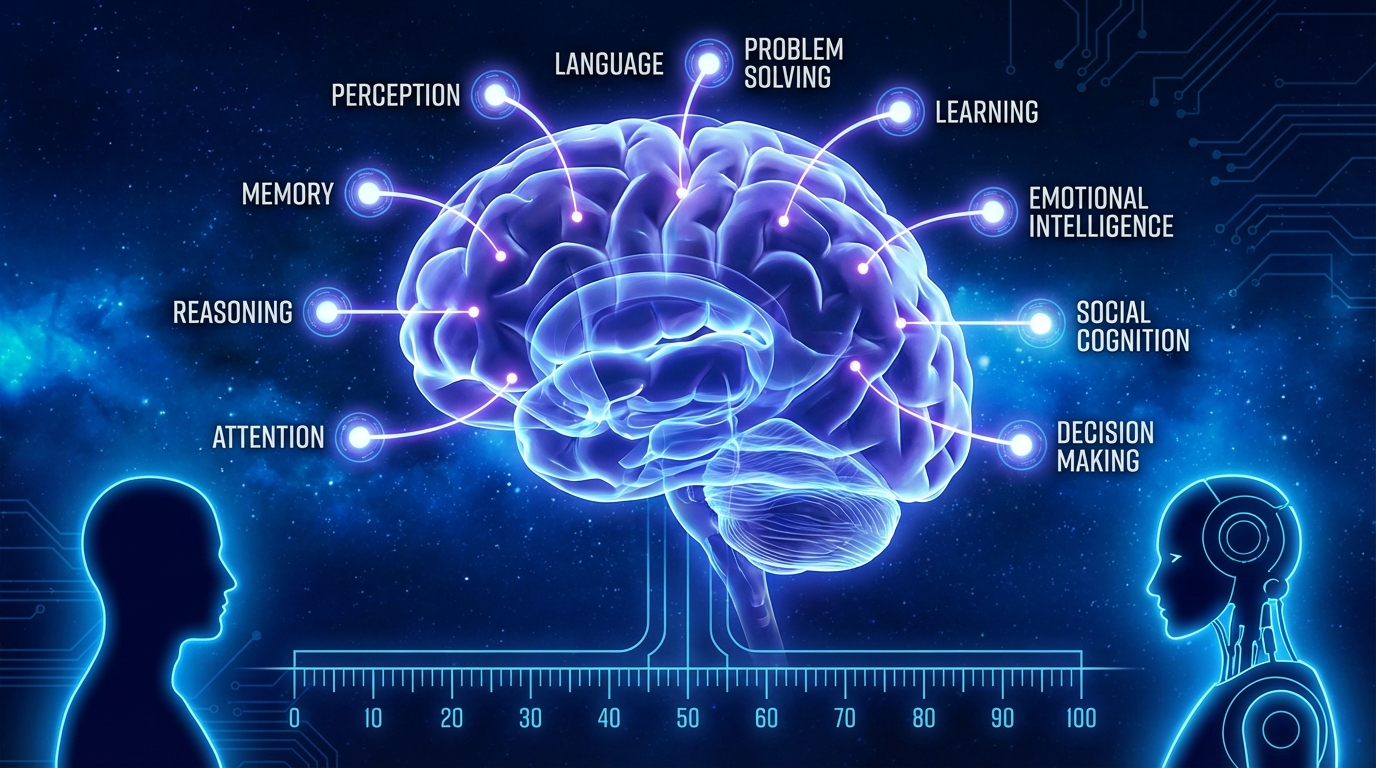

The paper, "Measuring Progress Toward AGI: A Cognitive Taxonomy," lays out a framework of 10 cognitive abilities drawn from decades of psychology and neuroscience research: perception, generation, attention, learning, memory, reasoning, metacognition, executive functions, problem solving, and social cognition. DeepMind researchers Ryan Burnell and Oran Kelly argue that these 10 areas collectively define general intelligence, and that progress toward AGI should be measured against all of them rather than against benchmark games or narrow task performance alone.

The Evaluation Gap

The proposed method is straightforward in principle: run AI models and representative human samples through the same cognitive tasks, then map AI performance against the distribution of human scores in each area.
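The article does not say exactly how the paper scores this comparison. One simple way to place an AI score within a human distribution is a percentile rank computed separately for each ability, sketched below. The function names, the midpoint convention for ties, and the sample scores are illustrative assumptions, not DeepMind's published protocol.

```python
from bisect import bisect_left, bisect_right

# Hypothetical illustration only; not the paper's actual scoring method.


def percentile_rank(ai_score: float, human_scores: list[float]) -> float:
    """Percent of human scores below ai_score, counting exact ties as half."""
    if not human_scores:
        raise ValueError("need at least one human score")
    ordered = sorted(human_scores)
    below = bisect_left(ordered, ai_score)           # humans strictly below the AI
    ties = bisect_right(ordered, ai_score) - below   # humans with exactly the AI's score
    return 100.0 * (below + 0.5 * ties) / len(ordered)


def cognitive_profile(
    ai_scores: dict[str, float], human_scores: dict[str, list[float]]
) -> dict[str, float]:
    """Map the AI's score on each ability onto the human distribution for that ability."""
    return {
        ability: percentile_rank(score, human_scores[ability])
        for ability, score in ai_scores.items()
    }


# Made-up sample data for two of the ten abilities.
humans = {
    "reasoning": [55, 60, 62, 70, 75, 80, 85, 90],
    "memory": [40, 50, 60, 70],
}
model = {"reasoning": 78, "memory": 50}
print(cognitive_profile(model, humans))  # {'reasoning': 62.5, 'memory': 37.5}
```

Reporting a per-ability percentile rather than one averaged score keeps uneven profiles visible: a model can sit far above the human median on one ability and well below it on another.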

The researchers acknowledge that five of the ten abilities currently have no adequate evaluations at all: learning, metacognition, attention, executive functions, and social cognition. That's where the community comes in.

$200K Kaggle Hackathon

DeepMind partnered with Kaggle to launch a hackathon inviting researchers and developers to design evaluations for those five underserved areas. The prize pool is $200,000: four overall winners take home $25,000 each ($100,000 in total), while the top two submissions in each of the five categories earn $10,000 per team (another $100,000 across ten awards).

The contest uses Kaggle's new Community Benchmarks platform, allowing public submissions to be evaluated and compared in the open.

Why It Matters

AGI remains a contested and loosely defined concept — OpenAI, Anthropic, and DeepMind itself all use the term differently. By grounding the definition in cognitive science and proposing standardized measurement protocols, DeepMind is trying to turn AGI from a marketing term into something that can actually be tracked empirically.