Google's TurboQuant Cuts AI Memory Usage 6x With Zero Accuracy Loss

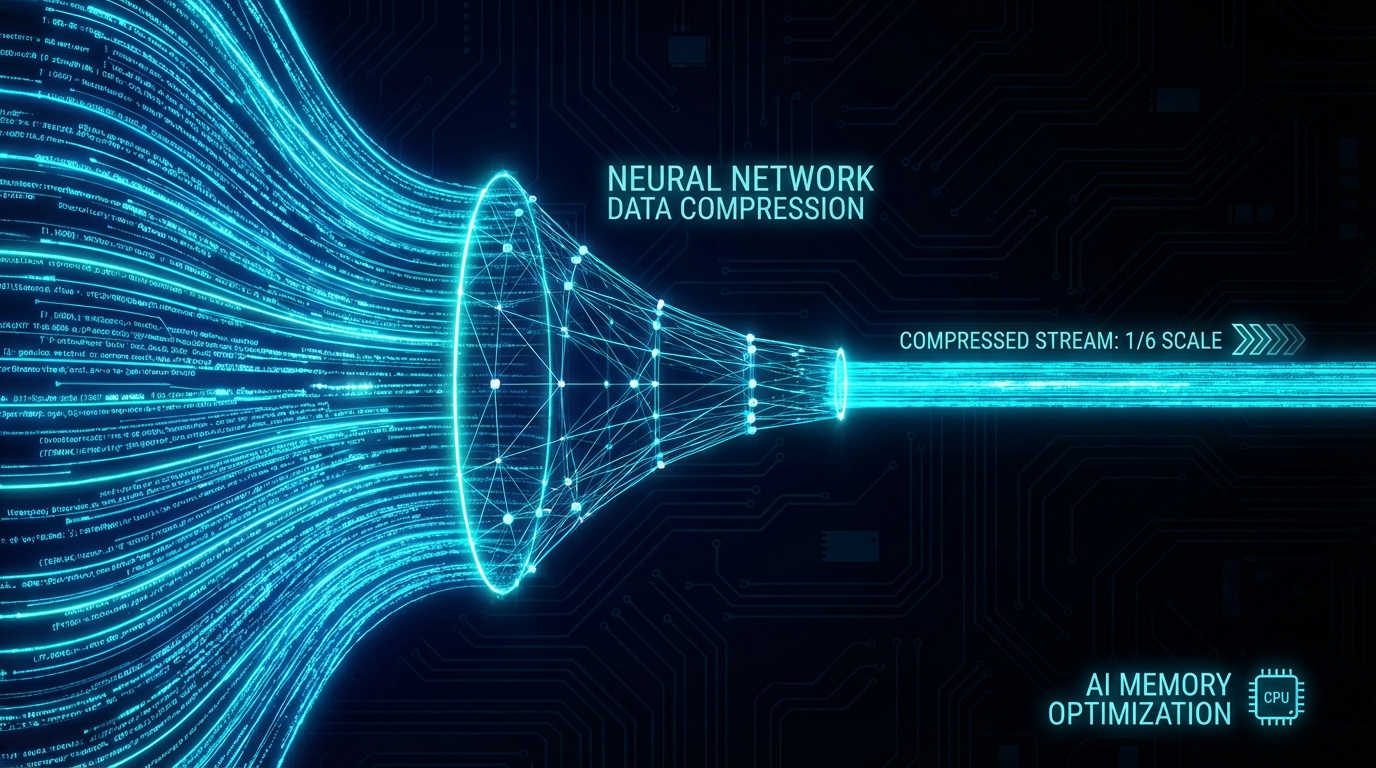

Google Research has published TurboQuant, a new compression algorithm designed to slash the memory demands of large language models. In Google's testing, it reduces key-value cache memory by at least 6x with zero accuracy loss, a significant improvement over traditional vector quantization methods.

The Problem It Solves

LLMs rely heavily on a key-value (KV) cache to speed up inference. As context windows grow, this cache becomes a major memory bottleneck. Existing quantization techniques can reduce memory, but they typically require storing extra "quantization constants" that add 1-2 bits of overhead per number — partially canceling out the savings.
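
To see where an overhead of 1-2 bits can come from, here is a rough calculation. It assumes a conventional block-wise scheme that stores one 16-bit scale and one 16-bit zero point per block; the block sizes, the 4-bit payload, and the 16-bit constants are illustrative assumptions, not figures from Google's work.

```python
def effective_bits(payload_bits, block_size, const_bits=16, consts_per_block=2):
    """Average bits stored per number once per-block constants are included.

    All defaults are hypothetical values chosen for illustration.
    """
    overhead = const_bits * consts_per_block / block_size
    return payload_bits + overhead


for block_size in (32, 16):
    total = effective_bits(4, block_size)
    print(
        f"block={block_size}: {total - 4:.1f} overhead bits, "
        f"{total:.1f} bits per number, {16 / total:.2f}x smaller than 16-bit"
    )
```

Under these assumptions, a nominal 4-bit format actually costs 5 to 6 bits per number, which is the kind of hidden cost the article describes.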

How TurboQuant Works

TurboQuant combines two new techniques:

- PolarQuant: converts high-dimensional vectors from Cartesian to polar coordinates, eliminating the need for per-block normalization constants entirely. In polar form the angles follow a predictable distribution, so they can be quantized on a fixed grid, allowing high-quality compression with no overhead.

- QJL (Quantized Johnson-Lindenstrauss): applies a Johnson-Lindenstrauss transform to the residual error left by the first stage and keeps only a 1-bit sign for each projected value. This corrects the bias from the first compression stage with essentially zero memory overhead.
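
The coordinate change behind the first technique can be sketched in a few lines. This is a simplified illustration, not the PolarQuant algorithm itself: pairing coordinates in 2D, the uniform angle grid, and leaving the radii unquantized are all assumptions made for brevity, and the function names are hypothetical. The point it shows is that an angle always lies in a known range, so the quantization grid can be fixed in advance and no scale or zero point has to be stored per block.

```python
import math


def to_polar_pairs(vec):
    """Group coordinates into (x, y) pairs and return (radius, angle) per pair."""
    assert len(vec) % 2 == 0, "this sketch expects an even number of coordinates"
    return [(math.hypot(x, y), math.atan2(y, x)) for x, y in zip(vec[0::2], vec[1::2])]


def quantize_angle(theta, bits):
    """Map an angle in [-pi, pi] to an integer code on a fixed uniform grid."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    return math.floor((theta + math.pi) / step) % levels


def dequantize_angle(code, bits):
    """Return the centre of the grid cell that `code` refers to."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    return -math.pi + (code + 0.5) * step


def reconstruct(radii, codes, bits):
    """Rebuild Cartesian coordinates from radii and quantized angle codes."""
    out = []
    for r, code in zip(radii, codes):
        theta = dequantize_angle(code, bits)
        out.extend([r * math.cos(theta), r * math.sin(theta)])
    return out


vec = [3.0, 4.0, -1.0, 2.0, 0.5, -0.5, -2.0, -2.0]
bits = 4  # hypothetical angle budget
polar = to_polar_pairs(vec)
radii = [r for r, _ in polar]
codes = [quantize_angle(t, bits) for _, t in polar]
approx = reconstruct(radii, codes, bits)
print(codes)
print([round(v, 3) for v in approx])
```

Because the grid depends only on `bits`, every vector shares the same codebook. In this sketch the angular error per pair is at most half a grid step.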

Together they deliver compression that is both more aggressive and more accurate than previous methods.
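
The 1-bit correction idea can also be sketched. The example below uses the standard sign-of-random-projection estimator for inner products: a residual vector is projected with a Gaussian matrix, only the signs are kept along with the residual's norm, and the query side stays unquantized. The coarse rounding used as the "first stage", the dimensions, and all function names are hypothetical stand-ins, so treat this as a sketch of the general technique rather than TurboQuant's implementation.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def gaussian_matrix(m, d, rng):
    """Random m x d projection matrix with i.i.d. standard normal entries."""
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]


def encode_signs(S, v):
    """Keep one sign per projection of v, plus the norm of v."""
    signs = [1 if dot(row, v) >= 0 else -1 for row in S]
    return signs, math.sqrt(dot(v, v))


def estimate_dot(S, q, signs, norm):
    """Unbiased estimate of <q, v> from the signs of S v and the norm of v."""
    proj_q = [dot(row, q) for row in S]
    return math.sqrt(math.pi / 2) * norm / len(S) * dot(proj_q, signs)


def demo(d=16, m=1024, seed=0):
    rng = random.Random(seed)
    S = gaussian_matrix(m, d, rng)
    k = [rng.uniform(-1, 1) for _ in range(d)]
    q = [rng.uniform(-1, 1) for _ in range(d)]
    k_coarse = [round(x * 2) / 2 for x in k]         # stand-in first stage
    residual = [a - b for a, b in zip(k, k_coarse)]  # error left behind
    signs, norm = encode_signs(S, residual)
    corrected = dot(q, k_coarse) + estimate_dot(S, q, signs, norm)
    return dot(q, k), dot(q, k_coarse), corrected


if __name__ == "__main__":
    exact, coarse, corrected = demo()
    print(f"exact={exact:.4f} coarse={coarse:.4f} corrected={corrected:.4f}")
```

The estimator is unbiased for Gaussian projections, and its variance shrinks as the number of projections `m` grows. On any single run the corrected value is not guaranteed to beat the coarse one, but averaged over the random projections it centres on the exact dot product.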

Results

Google evaluated TurboQuant on open-source LLMs including Gemma and Mistral across standard long-context benchmarks (LongBench, RULER, Needle In A Haystack). It achieved the best results on both dot-product distortion and recall while minimizing KV cache memory usage. The research is scheduled to be presented at ICLR 2026.

For anyone running inference at scale, or trying to extend context windows without buying more hardware, TurboQuant offers a practical way to shrink the KV cache.