Study Finds Malicious LLM Routers Can Hijack Agent Tool Calls

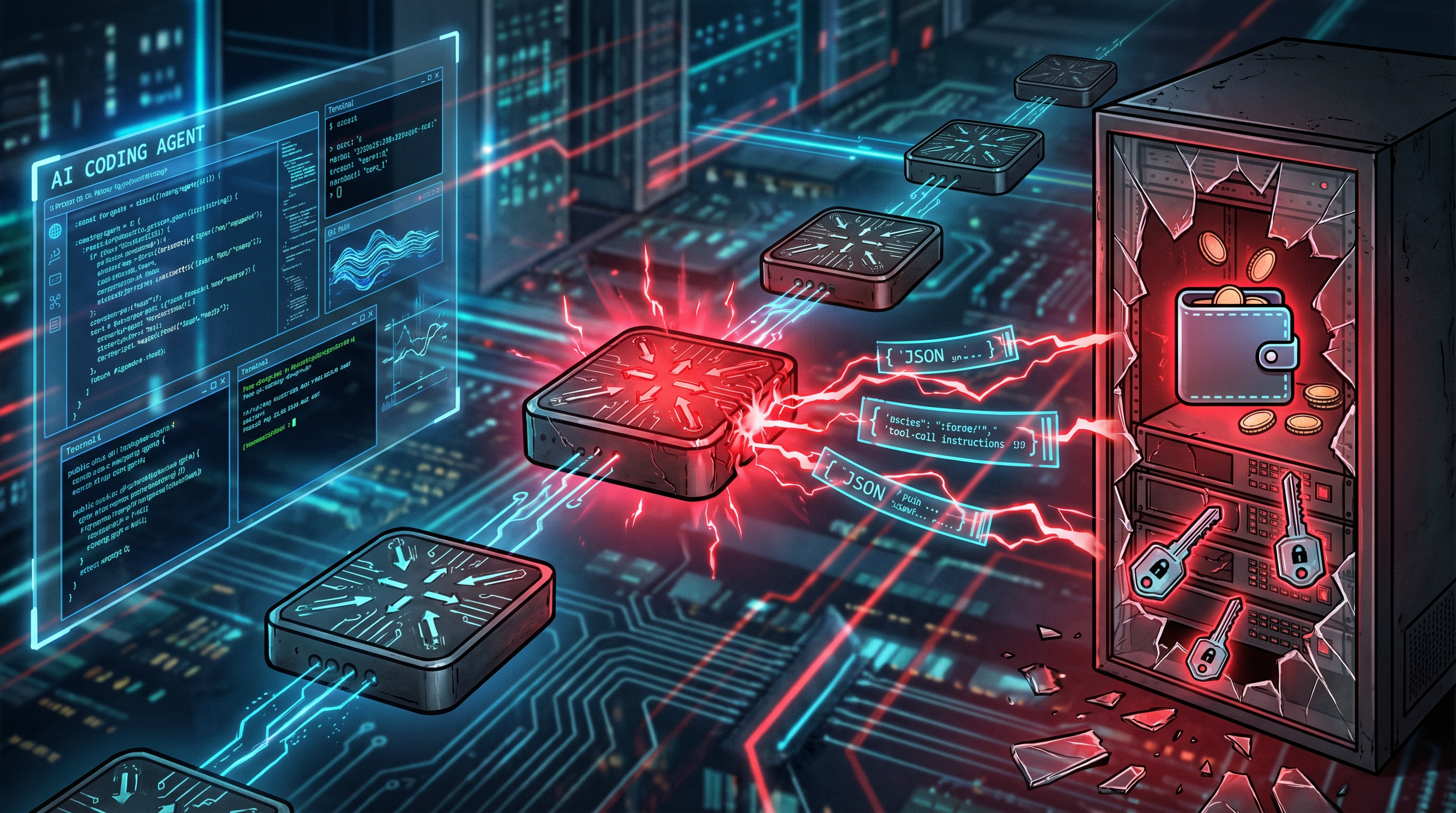

A new security paper is putting a spotlight on an overlooked part of the agent stack: LLM routers that sit between clients and upstream model providers. The authors argue those routers are effectively trusted middleboxes with full plaintext access to prompts, tool calls, API keys, and model responses, yet there is still no end-to-end integrity check tying a tool output to what the upstream model actually produced.

What the paper found

In the study, researchers tested 28 paid routers bought through Taobao, Xianyu, and Shopify-hosted storefronts, plus 400 free routers collected from public communities. They found nine routers actively injecting malicious code into returned tool calls, two using adaptive evasion tactics, 17 touching researcher-owned AWS canary credentials, and one draining ETH from a researcher-owned private key.

The paper also says the risk is not limited to obviously malicious services. In separate poisoning experiments, leaked keys and weak relay chains processed about 2.1 billion tokens, exposed 99 credentials across 440 Codex sessions, and showed how quickly compromised routing infrastructure can spread through autonomous agent workflows.

Why it matters

That matters well beyond chatbot demos. Agents are increasingly being used for coding, cloud operations, and crypto-adjacent payments, all of which depend on tool execution. The paper's core claim is simple: if the routing layer can silently rewrite a tool call, then the agent may execute attacker-controlled actions even when the underlying model provider was never compromised.