Google Brings MaxText SFT and RL Workflows to Single-Host TPUs

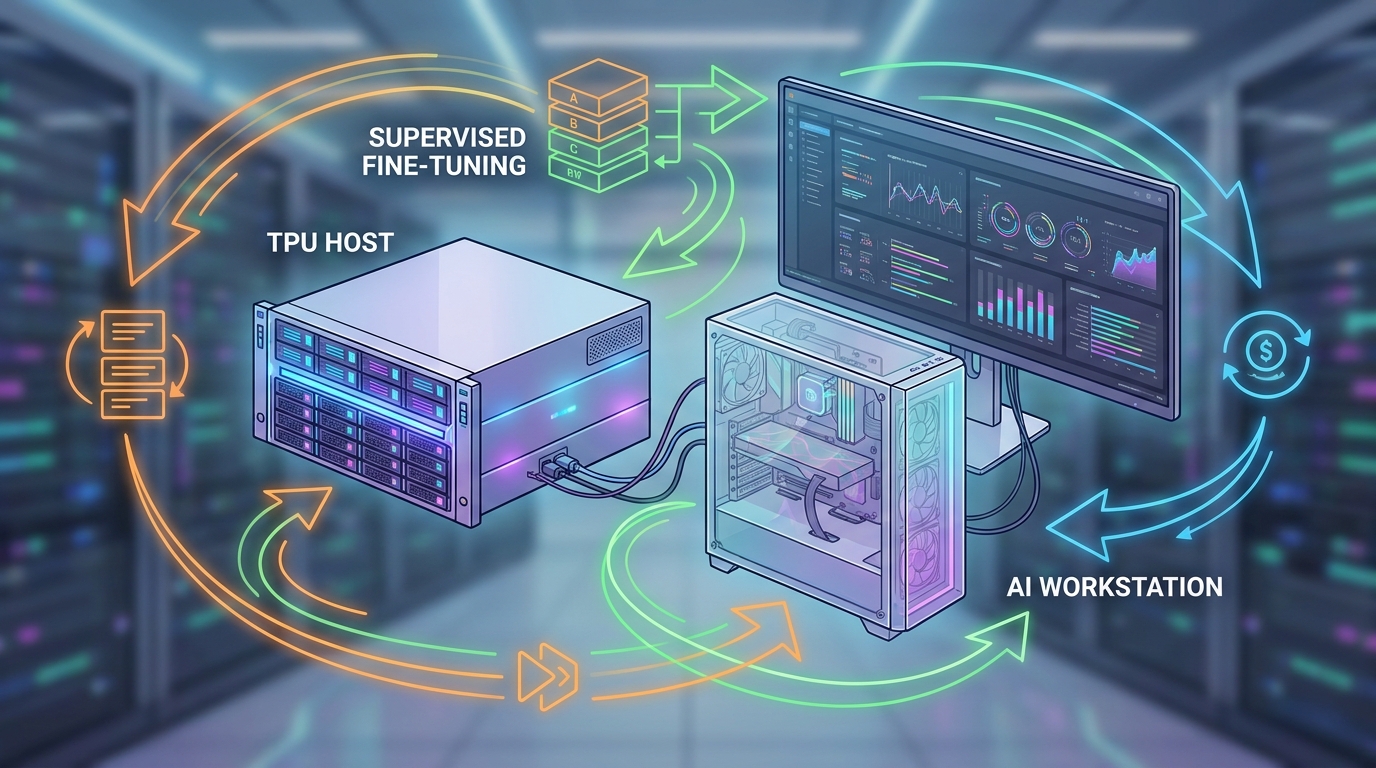

Google has expanded MaxText, its open-source JAX training stack for large language models, with new single-host TPU support for both supervised fine-tuning and reinforcement learning. The update matters because post-training has usually been easier to talk about than to reproduce, especially for smaller teams without access to large TPU clusters.

What shipped

According to Google's announcement and the linked MaxText docs, developers can now run SFT and RL workflows on a single TPU VM such as v5p-8 or v6e-8. The SFT path supports training on labeled Hugging Face datasets and can start from either an existing MaxText checkpoint or a converted Hugging Face checkpoint.
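The announcement does not show MaxText's loss code. In the usual setup, SFT on labeled prompt/response data means next-token cross-entropy computed only on the response tokens, with prompt and padding positions masked out. The sketch below illustrates that idea in NumPy, and the same arithmetic can be written with `jax.numpy`. The function name `masked_sft_loss` and the 0/1 masking convention are illustrative assumptions, not MaxText's API.

```python
import numpy as np

def masked_sft_loss(logits, targets, loss_mask):
    """Mean next-token cross-entropy over positions where loss_mask is 1 (hypothetical helper).

    logits:    [batch, seq, vocab] unnormalized scores
    targets:   [batch, seq] integer ids of the tokens the model should predict
    loss_mask: [batch, seq] 1.0 for response (label) tokens, 0.0 for prompt/padding
    """
    # Numerically stable log-softmax over the vocabulary axis.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))
    # Pick out the log-probability assigned to each target token.
    token_logp = np.take_along_axis(log_probs, targets[..., None], axis=-1)[..., 0]
    # Average negative log-likelihood over label tokens only; guard against an empty mask.
    return -(token_logp * loss_mask).sum() / max(loss_mask.sum(), 1.0)
```

With uniform logits the loss equals log(vocab_size) no matter which positions are masked. That makes a quick sanity check, and changing logits only at masked (prompt) positions should leave the loss unchanged.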

For reinforcement learning, Google documented support for GRPO (Group Relative Policy Optimization) and GSPO (Group Sequence Policy Optimization) on single-host setups. According to the RL tutorial, MaxText uses vLLM for inference inside the training loop and scores the model on the GSM8K math benchmark before and after post-training. Google also points developers to a dedicated `maxtext[tpu-post-train]` install target for these workflows.
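GRPO replaces a learned value baseline with a group baseline. It samples several completions per prompt and normalizes each reward against that group's mean and standard deviation. GSPO keeps the group-relative advantage but computes the importance ratio per sequence, as a length-normalized (geometric-mean) ratio, rather than per token. The NumPy sketch below shows both pieces under stated assumptions. Rewards are taken as already computed (for example, a hypothetical 1.0 for a correct GSM8K answer and 0.0 otherwise). The function names and the `clip_eps` default are placeholders and do not describe MaxText's implementation.

```python
import numpy as np

def group_relative_advantages(rewards, eps=1e-6):
    """GRPO-style advantages: normalize each completion's reward within its group.

    rewards: [num_prompts, group_size] scalar rewards for the completions sampled per prompt.
    """
    mean = rewards.mean(axis=1, keepdims=True)
    std = rewards.std(axis=1, keepdims=True)  # population std; eps avoids 0/0 for uniform groups
    return (rewards - mean) / (std + eps)

def gspo_sequence_ratio(logp_new, logp_old, mask):
    """GSPO-style sequence-level importance ratio, length-normalized.

    s_i = exp(mean over valid tokens of (log pi_new - log pi_old)),
    i.e. the geometric mean of the per-token probability ratios.
    logp_new, logp_old, mask: [num_seqs, seq_len]; mask is 1.0 for generated tokens.
    """
    lengths = mask.sum(axis=-1)
    return np.exp(((logp_new - logp_old) * mask).sum(axis=-1) / lengths)

def clipped_surrogate(ratio, advantages, clip_eps=0.2):
    """PPO-style clipped objective to maximize; clip_eps=0.2 is a placeholder value."""
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps)
    return np.minimum(ratio * advantages, clipped * advantages).mean()
```

In a full loop, the `[num_prompts, group_size]` advantage array would be flattened to line up with the per-sequence ratios. The optimizer would then maximize the clipped surrogate (or minimize its negative). GRPO would instead apply the clipping to per-token ratios.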

Why it matters

The conservative takeaway is not that post-training suddenly became cheap or simple. Teams still need TPU access, checkpoints, datasets, and enough expertise to manage the workflow. But Google has clearly lowered the barrier to entry, from multi-host infrastructure to something that fits on a single TPU host.

That makes MaxText more interesting as practical developer infrastructure, not just a large-scale reference project. For model teams already working in JAX and TPU environments, the change could make instruction tuning and reasoning-oriented RL experiments easier to test before scaling up.