Google Introduces TPU 8i and 8t as Specialized Eighth-Gen AI Chips

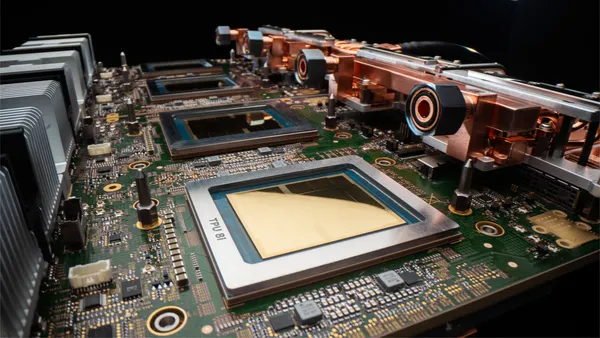

Google has unveiled TPU 8i and TPU 8t, framing the pair as the eighth generation of its Tensor Processing Unit lineup and a more specialized hardware stack for the next wave of AI workloads.

What Google announced

In its Cloud Next '26 announcement, Google said TPU 8i is designed for the fast response times needed by AI agents that have to reason, plan, and execute multi-step tasks without making users wait. TPU 8t, by contrast, is positioned as the training-focused part of the lineup; Google says it can run very large models on a single massive pool of memory.

That split matters because frontier AI infrastructure is increasingly being pulled in two directions at once: low-latency inference for interactive products, and ever-larger training jobs for foundation models. Rather than pitch one chip for everything, Google is now describing separate TPU roles for those workloads.

Why it matters

The announcement is still light on rollout detail, but the direction is clear. Google is tying future agent products to purpose-built silicon, networking, and datacenter infrastructure instead of treating the model as the whole product.

Google Cloud's release notes separately show support for eighth-generation TPU devices, a second official signal that the platform is being prepared around this new hardware tier. The conservative takeaway is that Google sees agent responsiveness and large-model training as distinct infrastructure problems and is designing its next TPU generation accordingly.